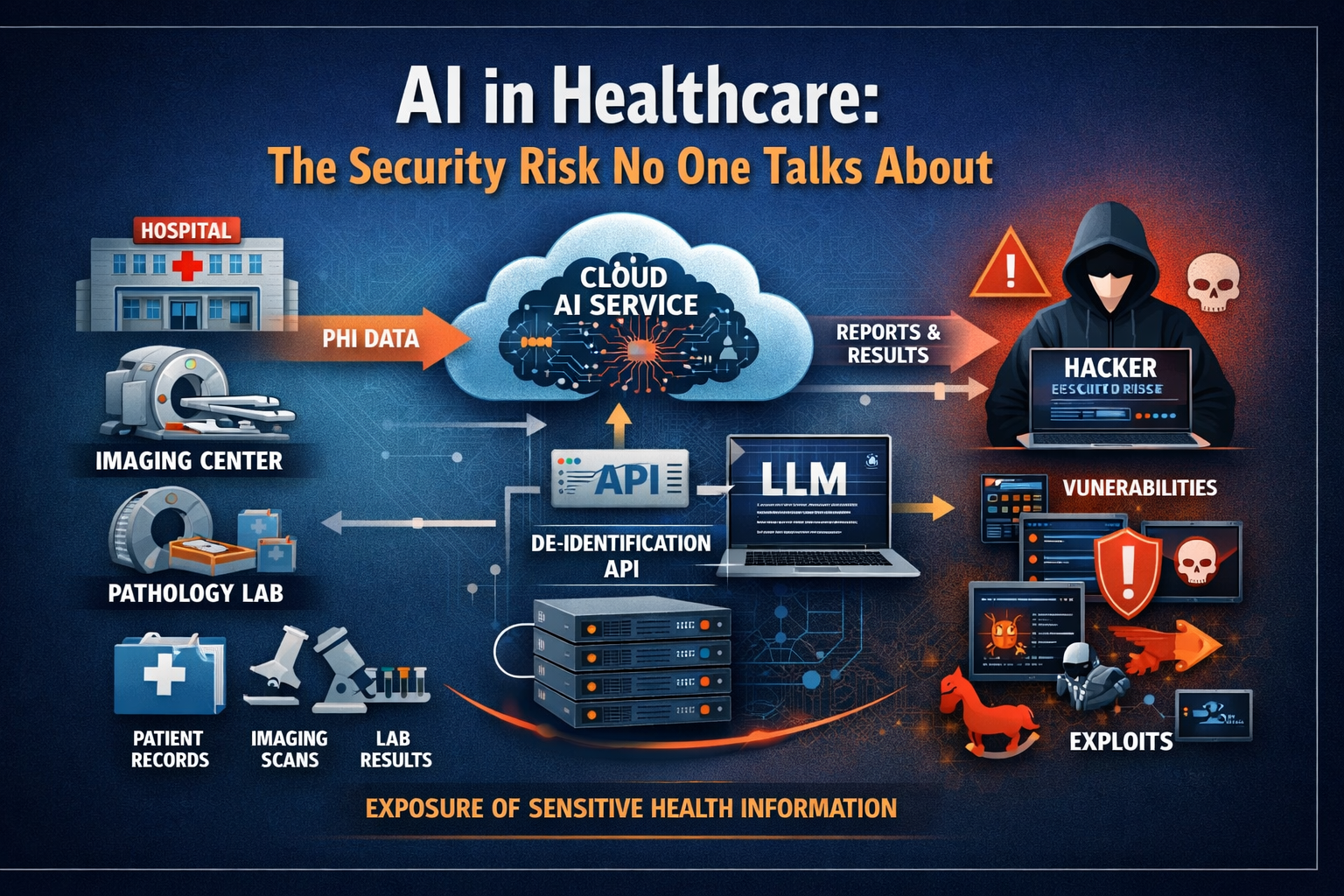

The path PHI travels through cloud AI workflows — and the attack surface that path creates.

When Anthropic recently disclosed that its latest frontier model, Claude Mythos Preview, is unusually capable at finding and exploiting software vulnerabilities — and launched Project Glasswing specifically to begin hardening critical infrastructure against that capability — the announcement was treated mostly as a general AI safety story. Security researchers took notice. Most healthcare leaders did not.

That is a mistake. And I want to explain precisely why.

I have spent close to thirty years in healthcare IT, the last two decades working directly with DICOM systems, enterprise PACS, and clinical imaging infrastructure. I know how these environments are built, how they are connected, and — critically — how rarely the people making AI adoption decisions have a clear picture of where protected health information goes once it leaves the facility. That gap has always carried risk. In the current AI environment, it carries considerably more.

What Anthropic Actually Disclosed

In its own red team technical report, Anthropic described Mythos Preview as "unusually strong" at both finding and exploiting software vulnerabilities. The report notes that the model has independently identified thousands of additional high- and critical-severity vulnerabilities, and that it has uncovered major flaws across important software and infrastructure through Project Glasswing. The report further states that Mythos Preview largely saturates earlier benchmarks for vulnerability exploitation — meaning the bar those benchmarks were designed to measure has effectively been cleared.

Anthropic is not being reckless in disclosing this. They are being responsible. Project Glasswing is a genuine attempt to get ahead of a capability that now exists and will continue to propagate across the frontier model ecosystem. The disclosure is, in that sense, reassuring about Anthropic's intentions.

It is not reassuring about the security posture of cloud-based healthcare AI architectures.

If frontier models are now capable of surfacing and exploiting serious software weaknesses at scale, what does that mean for every healthcare organization sending PHI into a cloud-based LLM workflow? The question is not whether the model is from Anthropic or anyone else. The question is whether every system in your AI chain — APIs, middleware, inference endpoints, logging infrastructure, credential stores — can withstand the class of threat that now exists.

The Fundamental Architecture Question Healthcare Is Not Asking

The conversation in healthcare AI has been almost entirely focused on what models can do. Can the model summarize a clinical chart? Generate a radiology report draft? Read a pathology narrative? Flag an anomaly in an imaging study? Answer a clinical question faster than a human reviewer?

Those are legitimate questions. But in healthcare, they are not the first questions.

The first question is: where does the PHI go? The second is: who touches it after it leaves the facility? The third is: what happens when any system in that chain has a vulnerability, a dependency failure, a credential compromise, a misconfigured logging rule, or an exposure event? HHS's own cloud guidance is explicit on this point — using cloud services does not transfer or reduce the regulated entity's obligation to understand and manage those risks. The Covered Entity remains responsible.

The dominant framing in healthcare AI right now, that cloud AI adoption is primarily a vendor selection and Business Associate Agreement (BAA) execution exercise, is not adequate. It was not adequate before frontier models became capable of finding and exploiting software vulnerabilities at scale. It is considerably less adequate now.

Why Imaging Environments Are Especially Exposed

The exposure concern is more serious in radiology, cardiology, and pathology than it is in most other clinical domains, for a reason that gets very little attention in the AI discussion: sensitive patient information in imaging is rarely confined to a discrete structured field.

In DICOM environments, PHI can exist in header tags, embedded metadata, study descriptions, accession details, workflow context, attached reports, and, in some common deployment scenarios, in the image content itself as burned-in annotations. When a healthcare organization says it is "sending imaging data to a cloud AI," the scope of what is actually transmitted can be significantly broader than what was intended or reviewed.

From a security standpoint, this matters because it expands the consequence surface of any breach or exposure event. A vulnerability that allows an attacker to access transmitted imaging data does not just expose one structured field. It potentially exposes the full clinical context of every study in the pipeline.

HIPAA requires safeguarding all ePHI that is created, received, maintained, or transmitted — including ePHI that exists in DICOM headers, imaging metadata, and workflow context. Organizations that have not inventoried where PHI lives in their imaging data before routing it to cloud AI services are not in a position to assess their actual exposure.
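A minimal sketch of what such an inventory might look like, in Python with only the standard library. It models each study's header as a plain dictionary keyed by DICOM attribute keyword. The `PHI_KEYWORDS` set is an illustrative and deliberately incomplete assumption, not a compliance reference, and `inventory_phi` is a hypothetical helper name. A real inventory would read headers with a DICOM toolkit and would also need to cover private tags, attached reports, and the pixel data itself.

```python
from collections import Counter

# Illustrative subset of DICOM attribute keywords that commonly carry PHI.
# Assumption: this list is incomplete; it is not a compliance reference.
PHI_KEYWORDS = {
    "PatientName", "PatientID", "PatientBirthDate", "PatientAddress",
    "AccessionNumber", "StudyDescription", "InstitutionName",
    "ReferringPhysicianName",
}


def inventory_phi(headers):
    """Count, per keyword, how many studies carry a non-empty PHI-bearing value.

    `headers` is a list of dicts mapping attribute keyword -> value, a
    stand-in for headers parsed from real DICOM files.
    """
    counts = Counter()
    for header in headers:
        for keyword, value in header.items():
            if keyword in PHI_KEYWORDS and str(value).strip():
                counts[keyword] += 1
        # BurnedInAnnotation (0028,0301) signals identifying text in the pixels.
        # Anything other than an explicit "NO" is treated as possible PHI.
        if str(header.get("BurnedInAnnotation", "")).upper() != "NO":
            counts["PixelData (possible burned-in text)"] += 1
    return dict(counts)


studies = [
    {"PatientName": "DOE^JANE", "PatientID": "12345", "Modality": "US",
     "StudyDescription": "OB follow-up", "BurnedInAnnotation": "YES"},
    {"PatientName": "", "PatientID": "67890", "Modality": "CT",
     "BurnedInAnnotation": "NO"},
]
report = inventory_phi(studies)
for keyword, n in sorted(report.items()):
    print(f"{keyword}: {n} of {len(studies)} studies")
```

Treating a missing `BurnedInAnnotation` value as "possibly present" is a conservative design choice in this sketch: the absence of the flag is not evidence that the pixels are clean.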

The De-Identification API Problem Nobody Talks About

There is a specific architectural pattern in healthcare AI that deserves much more scrutiny than it typically receives: the cloud-based de-identification API that sits between a clinical data source and a downstream AI platform or LLM workflow.

These APIs are commonly marketed as secure intermediaries. The pitch is that they strip PHI from imaging studies or clinical documents before that data reaches the AI system, reducing the compliance exposure of the downstream workflow. That is a useful function if the de-identification is technically complete and verifiable. In practice, these APIs can become the most sensitive components in the entire architecture, precisely because they sit directly in the path of regulated data as it is received, transformed, and transmitted.

OWASP's API Security Top 10 names the risk categories that apply here: broken object level authorization, broken authentication, broken object property level authorization, security misconfiguration, and unsafe consumption of APIs. Any one of these failure modes in a de-identification API creates a direct path to PHI exposure. NIST's SP 800-228 on API protection for cloud-native systems makes clear that these protections must be maintained throughout the full API lifecycle, not evaluated once at procurement and then forgotten.
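To make the first of those categories concrete, here is a minimal Python sketch of an object-level authorization check in front of a de-identification job store. Everything in it is hypothetical: the `Job` record, the `JOBS` store, the `get_job` function, and the tenant identifiers are illustrative assumptions, not any vendor's API. The point is only that authenticating the caller is not enough; each request must also verify that the caller is entitled to the specific object it names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    job_id: str
    owner_tenant: str  # the organization that submitted the study
    status: str


# Hypothetical in-memory store standing in for the API's backing database.
JOBS = {
    "job-001": Job("job-001", owner_tenant="hospital-a", status="done"),
    "job-002": Job("job-002", owner_tenant="hospital-b", status="done"),
}


class Forbidden(Exception):
    """Raised when a caller asks for an object it is not entitled to."""


def get_job(caller_tenant: str, job_id: str) -> Job:
    """Return a job only if it belongs to the authenticated caller's tenant."""
    job = JOBS.get(job_id)
    # Respond identically for "missing" and "not yours" so that job IDs
    # cannot be enumerated by watching for different error messages.
    if job is None or job.owner_tenant != caller_tenant:
        raise Forbidden(f"job {job_id!r} is not available to this caller")
    return job


print(get_job("hospital-a", "job-001").status)
try:
    get_job("hospital-a", "job-002")  # valid credentials, someone else's job
except Forbidden as exc:
    print("denied:", exc)
```

An API that skips the ownership comparison and returns any job whose ID is known would pass an authentication review and still expose another organization's studies.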

Healthcare organizations evaluating or deploying these APIs should be asking their vendors for detailed architecture documentation, penetration testing results, breach response procedures, and subcontractor data handling disclosures. A polished product demo and a signed BAA are not a substitute for that due diligence.

A BAA Is Not a Security Architecture

This point needs to be stated directly, because the BAA has become a kind of organizational security theater in healthcare AI discussions.

A Business Associate Agreement matters. It is legally required. It establishes contractual accountability for how PHI is handled by a vendor. None of that is trivial. But a signed BAA is not the same thing as a secure architecture. It does not minimize data exposure. It does not prevent PHI from reaching systems and vendors that do not need it. It does not substitute for understanding the data flow, the access model, the retention practices, the logging infrastructure, or the incident response obligations of every system that handles the data. HHS has stated explicitly that cloud use must comply with HIPAA beyond the contract itself.

A breach that results from a misconfigured API, an unpatched dependency, or a credential compromise in a vendor's cloud environment does not become less serious because there was a BAA in place. The Covered Entity's obligations to patients — and its notification obligations under the Breach Notification Rule — remain fully intact.

The question is not whether the vendor signed the agreement. The question is whether the architecture actually keeps PHI out of places it never needed to go in the first place. Those are different questions, and in healthcare AI they are rarely treated as different.

What Better Governance Looks Like

None of this is an argument against AI adoption in healthcare. AI will create real clinical and operational value. LLMs will improve specific workflows in meaningful ways. The goal is not to slow adoption — it is to ensure that adoption does not create a larger, more fragile, and more attractive target for the class of threat that now exists.

In practical terms, that means applying a more demanding governance standard to any workflow that requires PHI to leave the clinical environment. Specifically:

Treat cloud PHI transmission as a major governance decision, not a convenience feature. If a proposed AI workflow requires patient data to leave the hospital or lab environment, that decision should require documented justification, architecture review, and explicit executive sign-off — not just a vendor contract.

Prefer on-premises processing and pre-transmission de-identification wherever technically feasible. When PHI can be de-identified before it leaves the facility — using a verifiable, tested, and auditable process — that is almost always the more defensible architecture. It minimizes the attack surface and reduces the consequence of any downstream exposure event.

Apply the same vendor scrutiny to API security that you apply to EHR security. Demand architecture documentation. Ask for penetration test results. Understand data retention and subcontractor handling. Review logging and incident response procedures. These are not unreasonable requests — they are the minimum standard for any system handling regulated data.

Stop measuring AI readiness only by model capability. The question of whether a model produces useful outputs is important. It is not sufficient. An organization needs to understand what data it is exposing, to which systems, under what access controls, with what retention and logging rules, and with what incident response plan — before it deploys.
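The second recommendation above, de-identifying before data leaves the facility with a process that can be verified and audited, might be sketched as follows in Python. This is an illustration under stated assumptions, not a compliant de-identification implementation: headers are modeled as plain dictionaries, `REMOVE_KEYWORDS` is a small hypothetical subset of PHI-bearing DICOM attributes, and `deidentify`, `verify_clean`, and `prepare_for_transmission` are made-up names. It does not handle private tags or other free-text fields, and its only check on pixel data is the `BurnedInAnnotation` flag.

```python
import hashlib
import json

# Assumption: an illustrative, incomplete list of attributes to remove.
REMOVE_KEYWORDS = {
    "PatientName", "PatientID", "PatientBirthDate", "AccessionNumber",
    "StudyDescription", "InstitutionName", "ReferringPhysicianName",
}


def deidentify(header):
    """Return a copy of the header without the listed PHI-bearing attributes."""
    return {k: v for k, v in header.items() if k not in REMOVE_KEYWORDS}


def verify_clean(header):
    """List every reason this header must not leave the facility."""
    leftover = sorted(REMOVE_KEYWORDS.intersection(header))
    problems = [f"{k} still present" for k in leftover]
    if str(header.get("BurnedInAnnotation", "")).upper() != "NO":
        problems.append("pixel data not confirmed free of burned-in text")
    return problems


def prepare_for_transmission(header):
    """De-identify, verify, and produce an audit record; raise if unverified."""
    cleaned = deidentify(header)
    problems = verify_clean(cleaned)
    if problems:
        raise ValueError("transmission blocked: " + "; ".join(problems))
    # The audit record stores a digest of what was sent, not the data itself.
    payload = json.dumps(cleaned, sort_keys=True).encode("utf-8")
    audit = {
        "removed": sorted(REMOVE_KEYWORDS.intersection(header)),
        "sha256_of_payload": hashlib.sha256(payload).hexdigest(),
    }
    return cleaned, audit


study = {"PatientName": "DOE^JANE", "PatientID": "12345", "Modality": "CT",
         "StudyDescription": "CT CHEST W/O CONTRAST", "BurnedInAnnotation": "NO"}
cleaned, audit = prepare_for_transmission(study)
print(cleaned)
print(audit["removed"])
```

The design choice worth noting is that verification runs as a separate step on the output and fails closed: a study that cannot be confirmed clean is blocked rather than sent with a warning.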

The Broader Obligation

The disclosure from Anthropic is not an indictment of AI. It is evidence of a trajectory that healthcare leaders need to understand and plan for. Frontier models will continue to become more capable, including at tasks that have direct security implications for complex software environments. Healthcare AI architectures that were designed without serious attention to that threat surface are not suddenly more defensible because the vendor has a good reputation.

Healthcare organizations have a legal obligation to protect patient data. They also have an ethical obligation to their patients to take that protection seriously — not as a compliance exercise, but as a genuine security posture. In the current environment, that means asking harder questions about AI architecture than most organizations are asking today.

The issue is no longer only whether the AI can produce an answer. The issue is whether the organization had to build a larger, more fragile, and more attractive target to get that answer. That is not progress. And from where I sit, the people bearing the cost of that trade-off are not the technology vendors — they are patients.

- Anthropic, Claude Mythos Preview Red Team Technical Report and Project Glasswing documentation

- HHS/OCR, Cloud Computing Guidance and HIPAA Security Rule requirements for ePHI in cloud environments

- OWASP, API Security Top 10 (2023 edition)

- NIST Special Publication 800-228: Guidelines for API Protection for Cloud-Native Systems (2025)