The API layer is not just a connector — it is the gateway into the entire healthcare AI workflow, and often its most exploitable boundary.

AI in Healthcare Has a Security Problem No One Wants to Say Out Loud

How frontier AI models capable of finding and exploiting software vulnerabilities at scale change the risk calculus for every healthcare organization sending PHI to a cloud AI workflow.

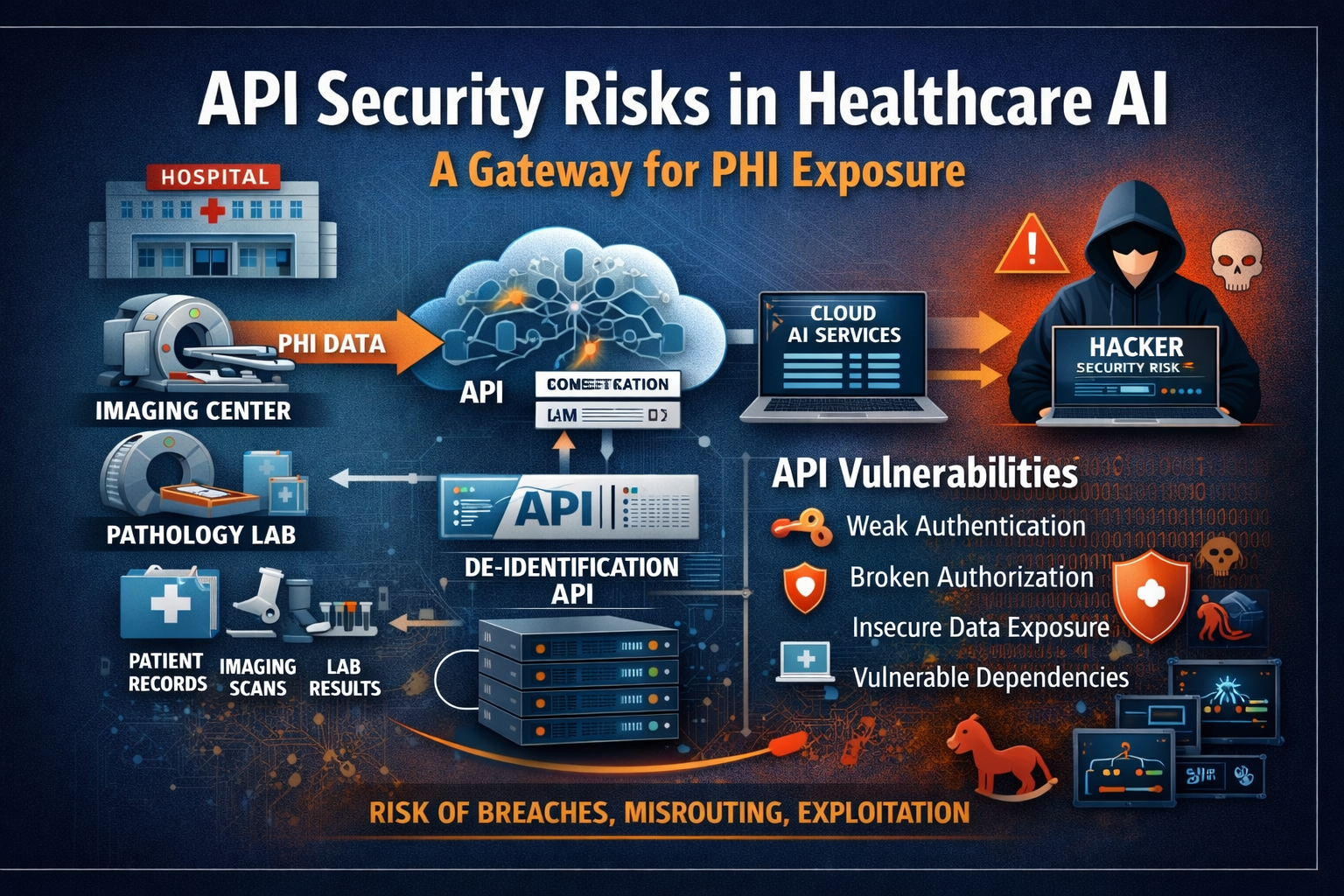

The first piece in this series examined what Anthropic's disclosure about Claude Mythos Preview means for healthcare organizations broadly, and why the dominant framing around cloud AI adoption (model capability plus a signed BAA) is insufficient for the threat environment that now exists. This piece goes one layer deeper, to the specific component that I believe is the most underexamined security boundary in healthcare AI today: the API layer.

Not the model. Not the cloud provider. The API that sits between the hospital, imaging center, or pathology lab and the AI platform — and that, in most modern healthcare AI architectures, handles far more than a simple data relay.

What These APIs Actually Do — and Why That Matters

The function of these APIs has grown significantly as healthcare AI workflows have matured. In the architectures I most commonly encounter, a cloud API is responsible for receiving imaging studies, pathology content, or related clinical documents from the facility; applying de-identification logic before the data is processed by an AI model; routing the processed data to the appropriate downstream AI service or LLM; and returning AI-generated outputs — annotations, findings summaries, report drafts, structured results — back to the originating system.

That is a substantial set of responsibilities. And it means the API is touching not just a structured text payload, but potentially metadata, DICOM headers, accession context, workflow identifiers, prior results, attachments, and returned AI outputs that later have to be matched and routed back to the correct patient record. HHS's own cloud guidance is unambiguous: when ePHI is created, received, maintained, or transmitted in any cloud workflow, the Covered Entity's compliance and security obligations remain fully intact. The presence of an API layer — and a vendor contract — does not change that.

An API that handles de-identification, AI inference routing, and the return of clinical outputs occupies the most sensitive position in the entire workflow. It receives regulated data, transforms it, transmits it to systems outside the facility's direct control, and returns derived clinical content back into the care environment. Each of those transitions is a trust boundary. Each is a potential failure point. And in healthcare, a failure at any one of them has direct consequences for patient privacy, data integrity, and clinical decision-making.

The OWASP Categories That Apply Directly to Healthcare AI APIs

OWASP's API Security Top 10 is the most widely recognized framework for categorizing how APIs fail in practice. Five of the ten categories apply with particular force to healthcare AI API architectures.

Broken object-level authorization ranks first in OWASP's 2023 list (API1:2023), and it is especially dangerous in multi-tenant healthcare environments. It occurs when an API does not consistently enforce that the requesting entity is actually authorized to access the specific object it is requesting: in healthcare terms, the specific study, patient record, or result. In a well-designed architecture, tenant A cannot query or retrieve data belonging to tenant B. In practice, object-level authorization failures are found regularly in production API environments, and when they involve healthcare data, the consequence is not an abstract data leak. It is unauthorized exposure of specific patients' protected health information.
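To make the object-level check concrete, here is a minimal sketch in Python. The `Study` type, the in-memory `STUDIES` store, and the `get_study` function are hypothetical names chosen for illustration, not any vendor's API; the point is only that ownership is verified on every lookup, not just at the route.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    study_id: str
    tenant_id: str  # facility that owns the study


class AuthorizationError(Exception):
    """Raised when a caller requests an object it does not own."""


# Hypothetical in-memory store standing in for a real database.
STUDIES = {
    "study-001": Study("study-001", "tenant-a"),
    "study-002": Study("study-002", "tenant-b"),
}


def get_study(caller_tenant_id: str, study_id: str) -> Study:
    """Return a study only if it belongs to the caller's tenant."""
    study = STUDIES.get(study_id)
    # Treat "missing" and "not yours" identically so the response does not
    # reveal whether another tenant's study ID exists.
    if study is None or study.tenant_id != caller_tenant_id:
        raise AuthorizationError("study not found for this tenant")
    return study
```

In this sketch, tenant A asking for `study-002` gets the same error it would get for an ID that does not exist, which avoids confirming that another tenant's record is there.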

Broken authentication remains a dominant failure mode in API environments despite being well understood. In healthcare AI workflows, the specific risks include overly broad API keys that grant more access than the integration requires, tokens with inadequate expiration policies, credentials stored in configuration that can be exposed through logging or environment leakage, and integrations that rely on shared secrets without per-tenant segmentation. A compromised credential in a healthcare AI API does not require a sophisticated exploit to cause serious harm; it requires finding and using a key that has broader access than it should.
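One way to picture narrowly scoped, expiring, per-tenant credentials is the following Python sketch. The `ApiToken` shape and the scope string `studies:submit` are assumptions made for the example; a real deployment would more likely rely on an established token standard and an identity provider.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class ApiToken:
    tenant_id: str
    scopes: FrozenSet[str]  # hypothetical scope names, e.g. "studies:submit"
    expires_at: float       # Unix timestamp in seconds


def is_allowed(
    token: ApiToken,
    tenant_id: str,
    required_scope: str,
    now: Optional[float] = None,
) -> bool:
    """Allow a call only for an unexpired token, the right tenant, and the right scope."""
    current = time.time() if now is None else now
    if current >= token.expires_at:
        return False  # expired tokens are rejected outright
    if token.tenant_id != tenant_id:
        return False  # no shared credentials across tenants
    return required_scope in token.scopes
```

A token issued only for `studies:submit` cannot be used to read results, so a leaked key exposes less than a single broad key would.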

Broken object property-level authorization describes a subtler failure: the API correctly validates that the requestor can access a given record, but returns more fields or attributes than the requestor should be able to see. In healthcare, this maps directly to the concept of minimum necessary access under the HIPAA Privacy Rule. An API that returns the full DICOM study object — including all header fields, embedded metadata, and attached reports — when the AI workflow only needed specific image data is creating unnecessary exposure, regardless of whether the requestor was legitimately authenticated.
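A field allow-list is one simple way to enforce this at the response level. The sketch below is illustrative only: the purpose name `inference` and the field names are hypothetical, and unknown purposes deliberately receive nothing.

```python
# Hypothetical mapping from a declared purpose to the fields it may receive.
ALLOWED_FIELDS = {
    "inference": {"study_uid", "modality", "pixel_data_ref"},
}


def project_fields(record: dict, purpose: str) -> dict:
    """Return only the fields permitted for the given purpose."""
    # Deny by default: a purpose with no allow-list gets an empty response.
    allowed = ALLOWED_FIELDS.get(purpose, set())
    return {name: value for name, value in record.items() if name in allowed}


record = {
    "study_uid": "1.2.3",
    "modality": "CT",
    "patient_name": "DOE^JANE",
    "pixel_data_ref": "blob://example",
}
inference_view = project_fields(record, "inference")  # patient_name is dropped
```

The design choice here is to list what may leave, not what must be hidden, so a newly added field stays private until someone decides otherwise.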

Security misconfiguration covers a wide range of failure modes: permissive CORS settings, unnecessary HTTP methods left enabled, default credentials not changed, TLS not enforced, error responses that leak implementation details, and rate limiting not applied. These are not exotic failures. They are the mundane reality of fast-moving development environments where security configurations are set once and rarely revisited. NIST's SP 800-228 specifically calls out the need to maintain API security configurations throughout the full operational lifecycle — not just at initial deployment.
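Because these settings drift over time, it can help to check them automatically and repeatedly. The following Python sketch audits a configuration dictionary for several of the failure modes listed above; the setting names (`cors_allowed_origins`, `enforce_tls`, and so on) are invented for the example and do not correspond to any particular gateway product.

```python
SAFE_METHODS = {"GET", "POST"}  # assumption: the integration needs only these


def audit_config(config: dict) -> list:
    """Return a list of human-readable findings; an empty list means no issues found."""
    findings = []
    # A missing CORS setting is treated as permissive.
    if "*" in config.get("cors_allowed_origins", ["*"]):
        findings.append("CORS allows any origin")
    if not config.get("enforce_tls", False):
        findings.append("TLS is not enforced")
    extra = set(config.get("allowed_methods", [])) - SAFE_METHODS
    if extra:
        findings.append("unnecessary HTTP methods enabled: " + ", ".join(sorted(extra)))
    if not config.get("rate_limit_per_minute"):
        findings.append("no rate limit configured")
    # A missing setting is treated as unsafe, not assumed safe.
    if config.get("verbose_errors", True):
        findings.append("error responses may leak implementation details")
    return findings
```

Running a check like this on every deployment, and on a schedule between deployments, is one way to treat configuration as something that is maintained over the lifecycle.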

Unsafe consumption of APIs describes the risk that arises when an API blindly trusts data returned from a downstream service without validating its structure, content, or provenance. In a healthcare AI workflow where AI-generated outputs are returned through an API and routed back into clinical systems, this is not a peripheral concern. An API that accepts and routes AI outputs without validating their structure and provenance creates an injection point — both for corrupted data and, in a worst case, for content designed to exploit downstream systems.
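As a rough illustration of validating provenance and structure before routing, the sketch below verifies an HMAC-SHA256 signature over the raw payload and then checks a minimal schema. It assumes the downstream AI service and the API share a secret key and that outputs arrive as JSON with `request_id`, `study_uid`, and `findings` fields; all of those are assumptions for the example, not a description of any vendor's format.

```python
import hashlib
import hmac
import json

# Hypothetical minimal schema for a returned AI output.
REQUIRED_FIELDS = {"request_id": str, "study_uid": str, "findings": list}


def sign(payload: bytes, key: bytes) -> str:
    """Compute a hex HMAC-SHA256 signature over the raw payload."""
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def validate_ai_output(raw: bytes, signature: str, key: bytes) -> dict:
    """Verify provenance first, then structure; raise ValueError on any failure."""
    expected = sign(raw, key)
    # Constant-time comparison avoids leaking how much of the signature matched.
    if not hmac.compare_digest(expected.encode("utf-8"), signature.encode("utf-8")):
        raise ValueError("signature mismatch: output provenance not verified")
    data = json.loads(raw)  # malformed JSON also raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            raise ValueError("missing or mistyped field: " + field)
    return data
```

The signature is checked before the payload is parsed, so unauthenticated content never reaches the parser or the routing logic.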

What NIST SP 800-228 Says Healthcare Organizations Need to Do

NIST finalized Special Publication 800-228 — Guidelines for API Protection for Cloud-Native Systems — in June 2025. The publication is directly applicable to the healthcare AI API architectures described in this article, and its core message is worth stating plainly: API security in cloud-native systems is not a one-time configuration task. It is an end-to-end lifecycle discipline spanning architecture, implementation, deployment, and ongoing operations.

That means API security requirements must be built into system design, not patched in after deployment. It means authentication and authorization controls need to be evaluated and tested continuously, not just at procurement. It means logging and monitoring need to be configured to actually surface anomalous patterns, not just to satisfy an audit checkbox. And it means that when a dependency in the API supply chain is found to have a vulnerability, there needs to be an operational process for detecting and remediating that vulnerability before it is exploited.

Most healthcare organizations evaluating cloud AI vendors are not asking about any of this. They are asking whether the vendor has HIPAA experience and whether they will sign a BAA. That procurement posture is not aligned with what NIST has determined API security in cloud-native systems requires.

How Vulnerable Are These APIs, Practically Speaking?

My assessment is that many are materially more vulnerable than the organizations buying and deploying them understand, and some are likely exploitably exposed right now. That is not a claim about any specific vendor — it is a structural observation about API ecosystems.

The most common API security failures are ordinary: weak or overly broad credentials, insufficient tenant separation, authorization checks that pass at the route level but not at the object level, overly verbose API responses, stale dependencies with known vulnerabilities, endpoints that were created for development or testing and never decommissioned, and monitoring gaps that mean anomalous access patterns go undetected. None of those require a sophisticated attacker. They require someone who knows where to look.

And that is precisely the context in which Anthropic's disclosures about Claude Mythos Preview are relevant. Anthropic's red team technical report describes the model as capable of conducting autonomous end-to-end attacks on small-scale enterprise networks, producing working proof-of-concept exploits without human steering, and identifying thousands of high- and critical-severity vulnerabilities across operating systems, browsers, and critical software through Project Glasswing. The model largely saturates earlier benchmarks for vulnerability exploitation — a measure of how far this capability class has advanced.

The implication for healthcare API security is not that Anthropic's model is being used to attack healthcare systems. It is that a capability that previously required a skilled human specialist to deploy is now within reach of much broader use. The time between "a weakness exists in this API" and "someone has found and weaponized that weakness" is shortening. That matters enormously for environments where the data in play is among the most sensitive and most valuable that exists.

The return path carries risk too. When AI-generated annotations, findings, or report drafts are returned through an API back into the clinical environment, the integrity of that data matters directly to patient safety. An API that does not validate the provenance and structure of returned AI outputs creates an injection point — for corrupted data, for misrouted results, and in a worst case, for content that exploits downstream clinical systems.
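Misrouting in particular can be guarded against by only accepting results that match a request the API itself sent out. The Python sketch below keeps a table of outstanding requests and refuses any returned output whose request ID is unknown or whose study identifier does not match. The `PendingRequests` class and its method names are hypothetical.

```python
class ReturnPathError(Exception):
    """Raised when a returned result cannot be matched to an outstanding request."""


class PendingRequests:
    def __init__(self) -> None:
        # request_id -> (tenant_id, study_uid) recorded when the study was sent out
        self._pending = {}

    def register(self, request_id: str, tenant_id: str, study_uid: str) -> None:
        self._pending[request_id] = (tenant_id, study_uid)

    def resolve(self, request_id: str, study_uid: str) -> str:
        """Return the tenant to route the result to, consuming the request."""
        entry = self._pending.get(request_id)
        if entry is None:
            raise ReturnPathError("unknown or already-completed request")
        tenant_id, expected_uid = entry
        if study_uid != expected_uid:
            raise ReturnPathError("result does not match the study that was sent")
        del self._pending[request_id]  # each request accepts exactly one result
        return tenant_id
```

Because each request is consumed on success, a replayed or duplicated result is rejected instead of being written into a patient record a second time.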

In healthcare, a data integrity failure on the return path is not just a security incident. It is a patient safety event.

The Questions Healthcare Organizations Must Start Asking

The procurement and due diligence conversations healthcare organizations have with cloud AI vendors need to change. The questions below are no longer nice-to-have — they are the minimum standard for any vendor whose API will handle PHI, supposedly de-identified imaging data, or returned clinical outputs.

- How is tenant separation enforced at the object level, not just the route level?

- What is the scope and lifecycle of API credentials — how are they issued, rotated, and revoked?

- What data fields are returned in API responses, and how is minimum-necessary access enforced?

- What is logged, where is it stored, and how long is it retained?

- Which subcontractors or downstream services receive or process the transmitted data?

- How are AI-generated outputs validated and routed before being returned to the originating system?

- What is the dependency management and vulnerability remediation process?

- What is the mean time to remediation for high-severity API vulnerabilities?

- What penetration testing has been performed on the API layer, and when?

- What is the incident response procedure if the de-identification logic fails?

These are not unreasonable questions. Any vendor operating a production healthcare AI API should be able to answer them clearly and specifically. A vendor that responds to these questions with generalities, deflections, or a pointer to their SOC 2 report is telling you something important about how seriously they treat the security of the data you would be entrusting to them.

The Architecture Implication

The most defensible posture for healthcare organizations is not to accept these API risks and try to manage them at procurement. It is to minimize the attack surface at the architectural level — to reduce the amount of PHI and de-identification responsibility that needs to cross an external API boundary at all.

That means treating on-premises de-identification as the default design goal, not an optional feature. It means keeping PHI transformation inside the facility's control boundary whenever it is technically feasible to do so. It means being skeptical of architectures in which de-identification is delegated to a cloud intermediary, because the intermediary itself becomes a high-value target — one that, by design, receives the most sensitive data before any protective transformation has occurred.
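A very reduced sketch of what on-premises header de-identification might look like is shown below. It operates on a plain dictionary keyed by DICOM attribute keywords, keeps an explicit allow-list, replaces two linking identifiers with a keyed hash so results can be re-matched inside the facility, and drops everything else. The choice of which attributes to keep is an assumption for illustration; this is not a complete or standards-conformant de-identification profile and does not address identifiers burned into pixel data.

```python
import hashlib
import hmac

# Assumption for illustration: attributes the AI workflow actually needs.
KEEP = {"Modality", "BodyPartExamined", "Rows", "Columns"}
# Identifiers replaced with a keyed hash so results can be re-linked on-premises.
PSEUDONYMIZE = {"PatientID", "AccessionNumber"}


def deidentify_header(header: dict, site_key: bytes) -> dict:
    """Return a reduced header; anything not explicitly listed is dropped."""
    out = {}
    for name, value in header.items():
        if name in KEEP:
            out[name] = value
        elif name in PSEUDONYMIZE:
            digest = hmac.new(site_key, str(value).encode("utf-8"), hashlib.sha256)
            out[name] = digest.hexdigest()[:32]
        # All other attributes (names, dates, institution, etc.) never leave.
    return out
```

The key (`site_key`) stays inside the facility, so the cloud side sees only pseudonyms it cannot reverse, and the mapping back to the real patient record is done locally when results return.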

In healthcare AI, the API may be the most important security boundary in the entire architecture. If that boundary is weak, the capability of the model behind it is largely irrelevant. The promise of cloud AI does not hold if the gateway cannot be trusted.

The healthcare organizations that will navigate this environment most successfully will be the ones that treat API security not as an IT implementation detail but as a core governance question — one that gets the same attention as model selection, vendor contracting, and clinical validation. The threat environment has changed. The governance posture needs to change with it.

- Anthropic, Claude Mythos Preview system card, red team technical report, and Project Glasswing documentation

- HHS/OCR, Cloud Computing Guidance and HIPAA Security Rule requirements for ePHI in cloud environments

- OWASP, API Security Top 10 (2023 edition)

- NIST, Special Publication 800-228: Guidelines for API Protection for Cloud-Native Systems (June 2025)